- cross-posted to:

- [email protected]

This is fucking hilarious. Especially the suit of armor. It feels like such a lazy fix

thanks that’s a big collection of ethically ambiguous memes ^^

Homer? Who is Homer? My name is “Ethinically Ambigaus”

Well, this isn’t the first time AI became racist.

It’s not even the first time AI owned majorly by Microsoft became racist

That got a chuckle out of me

Man I forgot about Tay. Time to go find those threads again.

I accidentally got Dall-E to be racist. I’m on a forum where we do endless AI Godzilla pictures (don’t ask) and I did “Godzilla crosses the border illegally” hoping for some sort of spy thing. Instead, I got Godzilla in a sombrero by a Trump-like border wall.

LOL! I really wanna see this!

It doesn’t start with Godzilla. Godzilla slowly took over. So it’s better to start at the bottom.

This is great

It’s an MST3K forum, so it’s not as deep a cut as you would think. But we have fun.

Ah, okay, makes sense. I didn’t notice any other obvious MST3K-isms in there.

Depends on the thread in the forum. That one doesn’t reference it that much, although we always do Earth vs. Soup as a test when a new AI comes out.

Real “I don’t notice your skin color at all!” vibes.

Was the AI trained on SRD

Yeah why not just expand the dataset it draws from to be less racially biased?

Ah, right, that would require effort.

I think this is more likely some bizarre attempt at making the AI anti-racist.

But you can’t argue against it unless you want to be called racist.

I’ve been encountering this! I thought it was the topics I was using as prompts somehow being bad – it was making some of my podcast sketches look stupidly racist, admittedly though some of them it seemed to style after some not-so-savoury podcasters, which made things worse.

Have you tried coming up with sketches instead of asking AI bots to do it for you?

Gah English.

“My sketches” as in “me using the AI software to draw pictures”. It’s not my podcast, I was trying to guess at what the presenters looked like based off the topics they discuss.

Haha, no worries. English is certainly a funny language.

Not sure why people are downvoting you :| If you misunderstood me then others will too, it’s useful having reply chains like this.

Bromer Samson

Bromine Saturation

I think I just found the name for my Tav in my upcoming Durge run of Baldur’s Gate 3.

“Ron stood there with his Ron shirt.”

“Ron’s Ron shirt was almost as bad as Ron himself.”

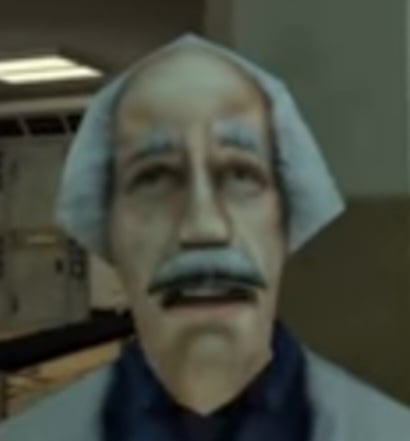

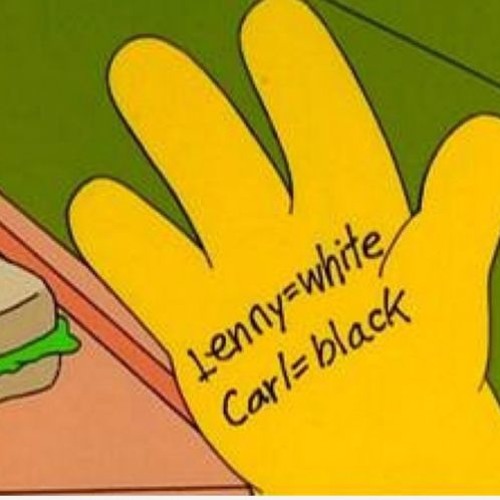

hand = white (yellow)

face = black

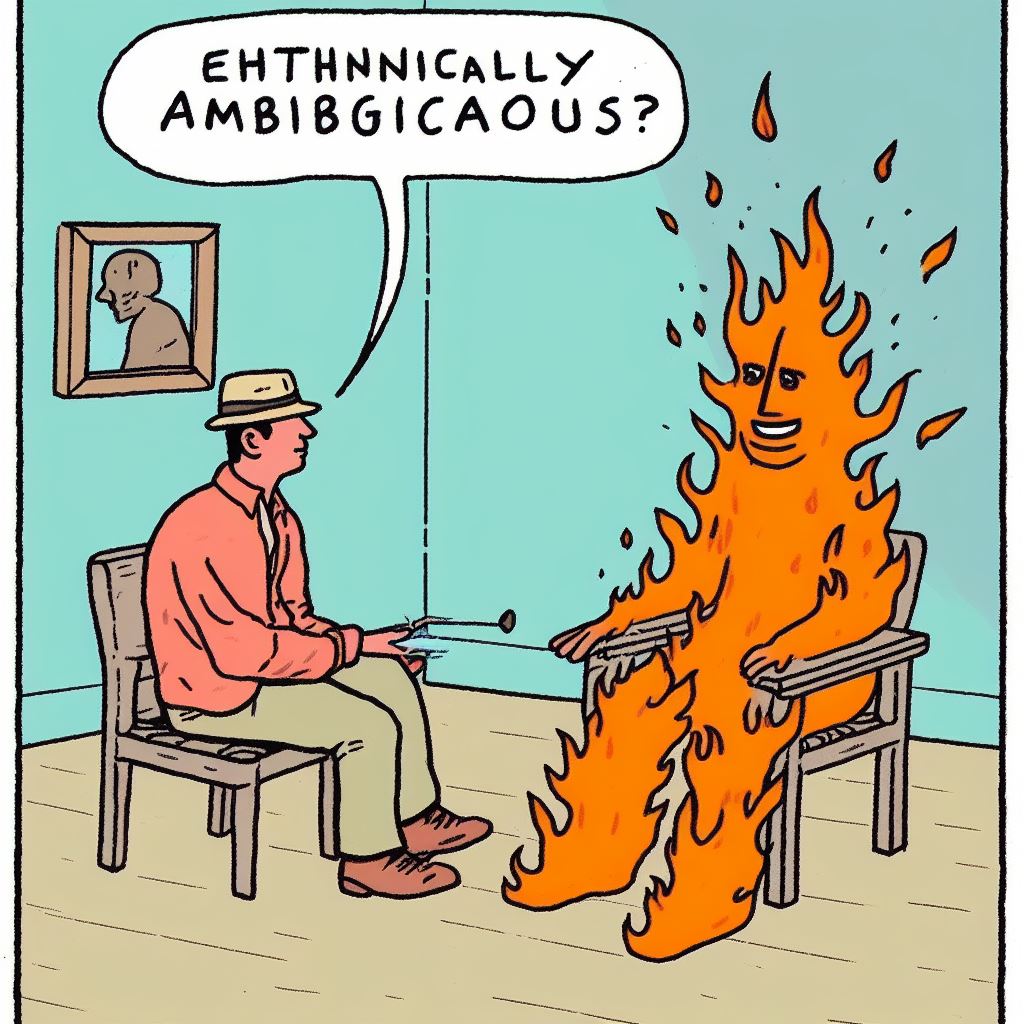

Word, here’s a prompt with dalle3 that also said something similar.

Was this your prompt? This seems to happen a lot then

It wasn’t in the prompt. I actually asked for him to be telling the therapist “I just want to be put out”.

What’s up with her username? Sounds like an AI trying to lurk among us

who?

The X reposter’s name.

Ethnically ambiguous guy duo!!!

The number of fingers and thumbs tells me this maybe isn’t an AI image.

Look at the hands in the upper corners, looks pretty AI-ish.

Yeah, those thumbs are not proportional to the wrist. And now I look again, bottom right and bottom left have an extra nub.

Nah, AI has just gotten really good at hands. They can still be a bit wonky, but I’ve seen images, hands and everything, that’d probably fool anyone who doesn’t go over the picture with a magnifying glass.

Imagine the tons of hand pictures they fed AI models just to combat that

Imagine the sheer number of fingers

More hands than your mum took.

Sorry, I couldn’t resist.

That explanation makes no fucking sense and makes them look like they know fuck all about AI training.

The output keywords have nothing to do with the training data. If the model in use has fuck all BME training data, it will struggle to draw a BME regardless of what key words are used.

And any AI person training their algorithms on AI generated data is liable to get fired. That is a big no-no. Not only does it not provide any new information from the data, it also amplifies the mistakes made by the AI.

They are not talking about the training process. To combat racial bias in the training process, they insert words into the prompt, for example “racially ambiguous”. For some reason, this time the AI weighted the inserted prompt so heavily that it made Homer from the Caribbean.

They are not talking about the training process

They literally say they do this “to combat the racial bias in its training data”

to combat racial bias in the training process, they insert words into the prompt, for example “racially ambiguous”.

And like I said, this makes no fucking sense.

If your training processes, specifically your training data, have biases, inserting key words does not fix that issue. It literally does nothing to actually combat it. It might hide issues if the data model has sufficient training to do the job with the inserted key words, but that is not a fix, nor combating the issue. It is a cheap hack that does not address the underlying training issues.

but that is not a fix

congratulations you stumbled upon the reason this is a bad idea all by yourself

all it took was a bit of actually-reading-the-original-post

?

My position was always that this is a bad idea.

the point of the original post is that artificially fixing a bias in training data post-training is a bad idea because it ends up in weird scenarios like this one

your comment is saying that the original post is dumb and betrays a lack of knowledge because artificially fixing a bias in training data post-training would obviously only result in weird scenarios like this one

i don’t know what your aim is here

You started your initial rant based on a misunderstanding of what was actually said. Stumbling into the correct answer != knowing what you’re reacting to

Yes. The training data has a bias, and they are using a cheap hack (prompt manipulation) to try to patch it.

Any training data almost certainly has biases. For a while, if you asked for pictures of people eating waffles or fried chicken they’d very likely be black.

Most of the pictures I tried of kid-type characters were blue eyed.

Then people review the output and say “hey, this might still be racist”, so they tweak things to “diversify” the output. This is likely the result of that, where they’ve “fixed” one “problem” and created another.

Behold, Homer in brownface. D’oh!

So the issue is not that they don’t have diverse training data, the issue is that not all things get equal representation. So their trained model will have biases to produce a white person when you ask generically for a “person”. To prevent it from always spitting out a white person when someone prompts the model for a generic person, they inject additional words into the prompt, like “racially ambiguous”. Therefore it occasionally encourages/forces more diversity in the results. The issue is that these models are too complex for these kinds of approaches to work seamlessly.
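For anyone wondering what that kind of prompt injection might look like, here is a minimal sketch. Everything in it is an assumption for illustration: the function name `augment_prompt`, the modifier list, the keyword check and the injection probability are all made up, and nothing here is the actual pipeline any image generator uses.

```python
import random

# Hypothetical modifiers; the terms a real service injects are not public.
MODIFIERS = ["racially ambiguous", "ethnically ambiguous"]

# Illustrative keywords suggesting the user already specified a demographic.
DEMOGRAPHIC_HINTS = {"white", "black", "asian", "hispanic", "latino", "latina"}


def augment_prompt(prompt, inject_probability=0.3, rng=random):
    """Sometimes append a diversity modifier to a prompt before generation."""
    words = {w.strip(".,!?").lower() for w in prompt.split()}
    if words & DEMOGRAPHIC_HINTS:
        # The user seems to have been explicit, so leave the prompt alone.
        return prompt
    if rng.random() < inject_probability:
        # Blind injection: nothing here knows whether the subject is a
        # generic "person" or a specific, well-known character.
        return f"{prompt}, {rng.choice(MODIFIERS)}"
    return prompt


if __name__ == "__main__":
    print(augment_prompt("Homer Simpson", inject_probability=1.0))
```

Note that the keyword check is just as crude as the injection: "a black cat" would count as the user having specified a demographic. A filter this simple cannot tell a generic person from a named cartoon character, which is roughly the failure mode in the post.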

There are 2 problems with not having enough diversity in training data:

- The AI will be worse at depicting diversity when prompted, e.g. if the AI hasn’t seen enough pictures of black people it may not be able to depict black hair properly as it doesn’t “know what it looks like”

- The AI will not show as much diversity when not prompted. The AI is working off statistics so if you tell it to depict a person and most of the people it’s “seen” are white men it will almost always depict a white man because that’s statistically what a person is according to its data.

This method combats the second problem, but not the first. The first can mostly be solved by generally scaling the training data though, which is mostly what these companies have been doing. Even if only 1% of your images are of POC, if you have 1 billion images then 10 million will be of POC, which may be enough to train it. The second problem would remain unsolved though, since the AI will always go with the statistically safe 99%.
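A quick sketch of the numbers in that comment, plus a toy version of the "statistically safe" behaviour. The 99/1 split and the two helper functions are illustrative assumptions, not a model of how any real generator picks its output.

```python
import random

total_images = 1_000_000_000  # the "1 billion images" from the comment
poc_percent = 1               # the 1% figure from the comment

# Integer arithmetic avoids floating-point rounding.
poc_images = total_images * poc_percent // 100

# Toy distribution standing in for what the model has "seen".
shares = {"majority": 0.99, "minority": 0.01}


def most_likely(shares):
    """Always pick the single most probable group (the 'safe' choice)."""
    return max(shares, key=shares.get)


def sample(shares, rng):
    """Pick a group in proportion to its share of the data."""
    groups = list(shares)
    weights = [shares[g] for g in groups]
    return rng.choices(groups, weights=weights, k=1)[0]


if __name__ == "__main__":
    print(poc_images)           # 10000000
    print(most_likely(shares))  # majority
```

Scaling the dataset raises `poc_images` in absolute terms, which helps the first problem, but `most_likely` returns the same answer no matter how large `total_images` gets. Even proportional sampling would only show the minority group about 1% of the time.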

any AI person training their algorithms on AI generated data is liable to get fired

though this isn’t pertinent to the post in question, training AI (and by AI I presume you mean neural networks, since there’s a fairly important distinction) on AI-generated data is absolutely a part of machine learning.

some of the most famous neural networks out there are trained on data that they’ve generated themselves -> e.g., AlphaGo Zero

They could try to compensate for the imbalance by explicitly asking for the less-represented classes in the data… It’s an idea, not quite bad but not quite good either because of the problems you mentioned.
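One common way to express "ask for the less-represented classes more often" is inverse-frequency weighting. This is a rough sketch with made-up counts and a hypothetical helper name, not something the thread or the original post describes being used.

```python
def inverse_frequency_weights(counts):
    """Weight each class by 1/count, normalised so the weights sum to 1."""
    inverse = {label: 1.0 / n for label, n in counts.items()}
    total = sum(inverse.values())
    return {label: w / total for label, w in inverse.items()}


# Made-up class counts for illustration only.
counts = {"majority": 990, "minority": 10}
weights = inverse_frequency_weights(counts)

if __name__ == "__main__":
    for label, weight in weights.items():
        print(label, round(weight, 2))
```

With these counts the rare class ends up with a weight of about 0.99, so used blindly this swings the output almost entirely the other way, which fits the "not quite bad but not quite good" verdict.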