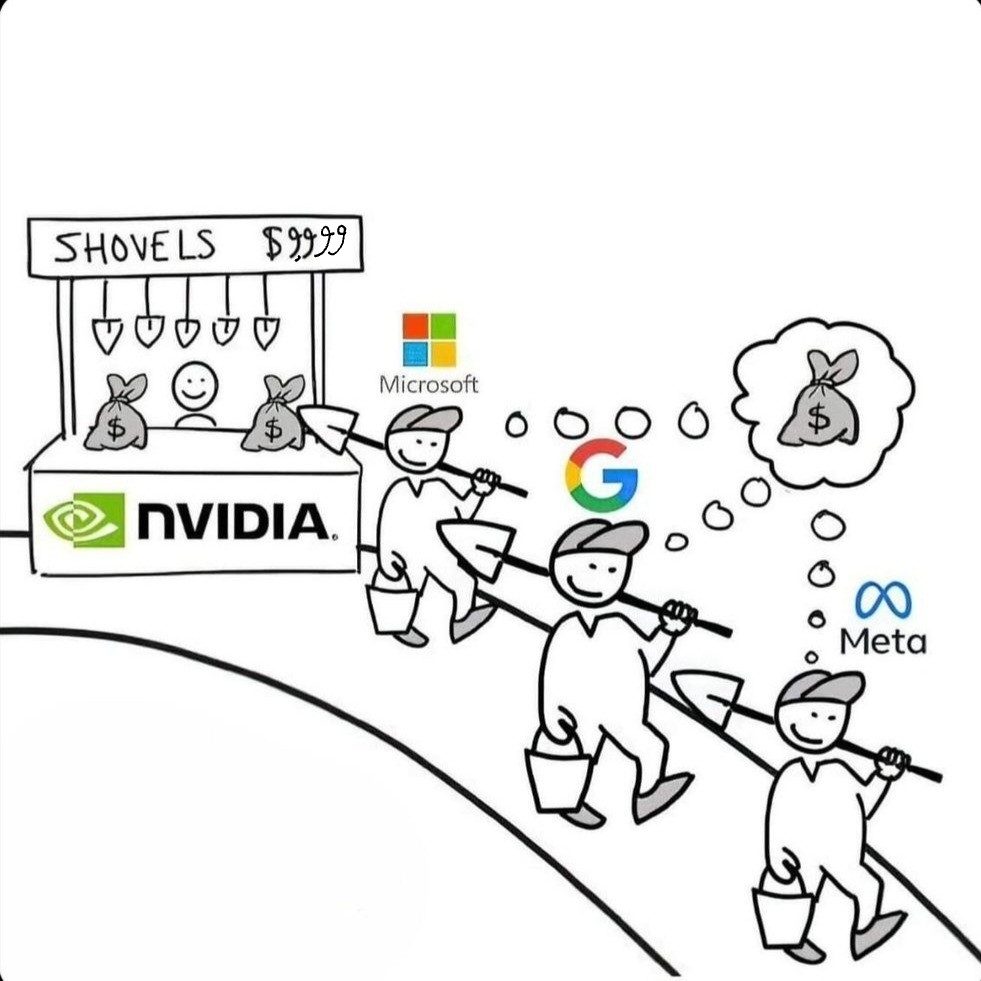

Now where is the shovel head maker, TSMC?

And then China popping their head out claiming Taiwan is part of China because they want to seize TSMC

Those things aren’t mutually exclusive. Yes, they are dumping massive resources into SMIC. Yes, they also want to maintain imperialism over Taiwan, and TSMC is a part of that. Some of it is fear-mongering sure, but China is consistently confrontational in the South China Sea and beyond. There’s a reason they enforce an abrasive naval presence there and continue to press against the Philippines.

https://www.ft.com/content/b4ee2e18-3256-4371-8369-9a3118959fca

> they also want to maintain imperialism over Taiwan

Not to deny the realities of the tensions there, but liberals are relatively loose with the term imperialism. There is a difference between an imperialist state like the US and an anti-imperialist (and until recently imperialized) state like China.

> China is consistently confrontational in the South China Sea and beyond

Yeah why so confrontational, China?

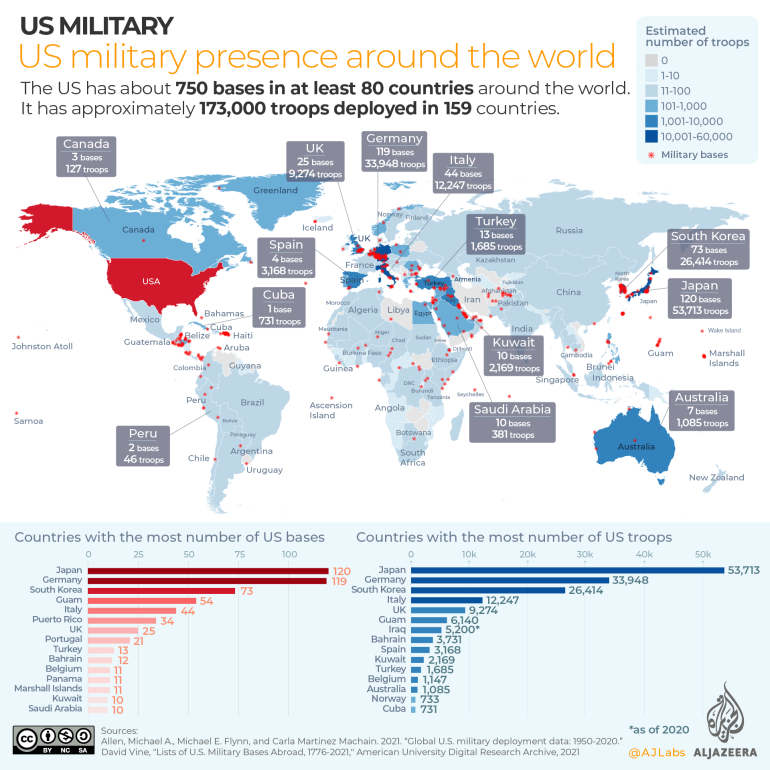

Foreign Policy, 2013: Surrounded: How the U.S. Is Encircling China with Military Bases. And that article is a decade old; it’s only gotten worse.

The US has over 750 overseas military bases around the world, and is building more to further encircle China. Meanwhile China has one anti-piracy base in Djibouti.

Buddy, that’s literally what US state department claims on their official website:

The US state department doesn’t decide which countries own or control which territory, now does it? How exactly can you say territory you don’t control (neither legally nor militarily) and likely will never control is part of your own country? Furthermore, why would the US risk ruining trade relations with China over unnecessarily pointing out reality, when it doesn’t benefit the US to recognize Taiwanese independence?

the us has literally asserted taiwan is part of china for decades now.

under the kissinger term, no less.

This is also the position of the UN, and the vast majority of countries in the world. Taiwan is part of China, get over it.

I’ll say it again: Why would countries risk ruining trade relations with China, one of the three most important trade powers internationally, over unnecessarily pointing out reality and thus contradicting China? And how can you seriously say territory a country doesn’t control in any capacity at all is theirs? Why do you think a majority of world powers are independently trading with Taiwan if Taiwan isn’t independent from China?

Don’t you think China would, you know, not be constantly complaining about not having control over Taiwan for the past few decades and making bluffs about invading if Taiwan were already part of China? That’s a pretty obvious sign that “no, China doesn’t own Taiwan in any capacity”.

What you’re doing here is called sophistry. Taiwan being part of China is a fact that’s recognized by international law. It’s really that simple. The reality is that China could remove the US-sponsored regime in the rogue province any time they want. However, they realize that it’s much better to remove burgerland influence in a peaceful way, and that’s what will happen. It’s incredible how people have trouble grasping such basic things.

edit: I absolutely love how utterly enraged lemmy radlibs get when faced with reality

> What you’re doing here is called sophistry. Taiwan being part of China is a fact that’s recognized by international law.

Tell me you have no idea how the UN works without explicitly saying so. A majority of countries not recognizing Taiwan doesn’t mean it’s “international law” that Taiwan isn’t independent.

> It’s really that simple. The reality is that China could remove US sponsored regime in the rogue province any time they want.

LMAO this is such a cope. Yeah I’m sure the extremely aggressive all-bark-no-bite and constant “you better not do <x diplomacy with Taiwan or military action in Taiwanese strait/South China Sea> again or we’ll do something about it, I swear!” empire is suuuper capable of taking Taiwan. They know if they tried full-out war against the US or its allies (Taiwan), the US navy would cut off their international trade and turn their country upside down – it’s why they’re trying so hard (and failing) to seize full control of the South China Sea.

> However, they realize that it’s much better to remove burgerland influence in a peaceful way, and that’s what will happen.

Again, absolute cope. They’ve been at it for over 75 years and haven’t made any progress, considering Taiwanese have developed significantly more national identity and even more people in Taiwan support the country participating in international relations under the name “Taiwan” (80%) and consider themselves primarily Taiwanese (90%), and only 6% consider themselves more Chinese than Taiwanese (more people considered themselves primarily Chinese many decades ago but that has long since dwindled).

> It’s incredible how people have trouble grasping such basic things.

It’s incredible how you have trouble grasping the situation and think China is going to “peacefully” absorb Taiwan when Taiwan is farther from China than ever in terms of national identity and international participation.

Several polls have indicated an increase in support of Taiwanese independence in the three decades after 1990. In a Taiwanese Public Opinion Foundation poll conducted in June 2020, 54% of respondents supported de jure independence for Taiwan, 23.4% preferred maintaining the status quo, 12.5% favored unification with China, and 10% did not hold any particular view on the matter. This represented the highest level of support for Taiwanese independence since the survey was first conducted in 1991. A later TPOF poll in 2022 showed similar results.

has been part of china for 2000 years, anglo imperialism wont change that

Pretty sure China has actually been a part of Taiwan for 2000 years

You have an island governed by a democratically elected government, with a population that from what I remember mostly doesn’t want to be assimilated into the PRC. The PRC taking it by force would, in my eyes, be rather imperialistic.

democratically elected? arguable and only for the last few decades at that. it was run as a brutal single party dictatorship backed by amerikkka until fairly recently. And last time i checked the vast majority of people in Taiwan want to maintain the status quo which is that Taiwan is part of China.

Yeah, fuck the KMT. But as you have recognised, they aren’t a dictatorship anymore.

And the status quo is that they are de facto a small independent island nation, that is de jure claiming mainland China.

It just writes itself lmao

the angry wasp nest has spoken

Tankies. Tankies everywhere.

Don’t forget AMD, good potential if they bring out similar technology to compete with NVIDIA. Less so Intel, but they’re in the GPU market too.

Does ARM do anything special with AI? Or is that just the actual chip manufacturers designing that themselves?

I think it’s largely the chip manufacturers, but ARM is still making money on licensing fees for Nvidia’s new AI chip (with an integrated 72-core ARM CPU), for example.

ARM is in the perfect place where, if a company using their architecture succeeds, they get tons of money, and if the company fails, they lose nothing.

As I understand it, ARM chips are much more efficient on the same tasks, so they’re cheaper to run.

Don’t forget Qualcomm either.

Edited the price to something more nvidiaish:

Gotta add a few more 9s to that. These are enterprise cards we’re talking about.

Literally about to do same.

Jensen also is obsessed with how much stuff weighs. So maybe he’d sell shovels by the ton.

Nobody expects the “4 elephants” GPU.

Worst one is probably Apple. They just announced “Apple Intelligence”, which is just ChatGPT, whose largest shareholder is Microsoft. Figure that one out.

Well, most of the requests are handled on device with their own models. If it’s going to ChatGPT for something it will ask for permission and then use ChatGPT.

So the Apple Intelligence isn’t all ChatGPT. I think this deserves to be mentioned as a lot of the processing will be on device.

Also, I believe part of the deal is ChatGPT can save nothing and Apple are anonymising the requests too.

> Well, most of the requests are handled on device

Doubt.

Voice recognition, image recognition, yes. But actual questions will go to Apple servers.

> Doubt.

Is this conjecture, or can you provide some further reading, in the interest of not spreading misinformation?

Edit: I decided to read the info from Apple.

With Private Cloud Compute, Apple sets a new standard for privacy in AI, with the ability to flex and scale computational capacity between on-device processing, and larger, server-based models that run on dedicated Apple silicon servers. When requests are routed to Private Cloud Compute, data is not stored or made accessible to Apple and is only used to fulfill the user’s requests, and independent experts can verify this privacy.

Additionally, access to ChatGPT is integrated into Siri and systemwide Writing Tools across Apple’s platforms, allowing users to access its expertise — as well as its image- and document-understanding capabilities — without needing to jump between tools.

Say what you will about Apple, but privacy isn’t a concern for me. Perhaps, some independent experts will verify this in time.

Which is exactly what I said. It’s not local.

That they are keeping the data you send private is irrelevant to the OP claim that the AI model answering questions is local.

chatgpt won’t save anything? Doubtful.

Brother I do not care about your doubts.

I want hard facts here.

Do you think that if you enter into a contract with a company like Apple they’ll just be like, aww shit they weren’t supposed to do that. Anyway let’s carry on.

No. This would open OpenAi up to potential lawsuits.

Even if they did save stuff. It gets anonymised by Apple before even being sent to ChatGPT servers.

thing is apple doesnt give a shit about ur privacy

The hard fact is OpenAI is already exposing itself to lawsuits by training on copyrighted material.

So the proof here should be “what makes them trustworthy this time?”

There’s kind of a difference between “we scraped the internet and decided to use copyrighted content anyways because we decided to interpret copyright law as not being applicable to the content we generate using copyrighted content” (omegalul) and “we explicitly agreed to a legally-binding contract with Apple stating we won’t do that”.

If you think that’s the WORST ONE, you have no idea about any of this

That’s just not true. Most requests are handled on-device. If the system decides a request should go to ChatGPT, the user is prompted to agree and no data is stored on OpenAI’s servers. Plus, all of this is opt-in.

> Most requests are handled on-device.

Literally impossible.

“Hey Siri, what’s the weather forecast for tomorrow.”

< The Farmer’s Almanac that is in my local model says it will rain tomorrow. >

I think there’s a larger picture at play here that is being missed.

Getting the weather is a standard feature for years now. Nothing AI about it.

What is “AI” is:

> Hey Siri, what is the weather at my daughter’s recital coming up?

The AI processing, calculated on-device if what they claim is true, is:

- the determination of who your daughter is

- What is a recital? An event? Are there any upcoming calendar events that match this concept?

- Is the “daughter” associated with this event by description or invitation? Yes? OK, what’s the address?

- Submit zip code of recital calendar event involving the kid to the weather API, and churn out a reply that includes all this information…

> Well {Your phone contact name}, it looks like it will {remote weather response} during your {calendar event from phone} with {daughter from contacts} on {event date}.

That is the idea behind splitting on-device and cloud processing. The phone already has your contacts and calendar and does that work offline rather than educating an online server about your family, events and location, and requests the bare minimum from the internet, in this case nothing more than if you opened the weather app yourself and put in a zip code.
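To make the steps above concrete, here is a rough Python sketch of that flow. Everything in it is made up for illustration: the `Contact` and `Event` types, the sample data, and `fetch_weather` (a stand-in for whatever remote weather API would really be called). It is not how Apple implements anything, just the shape of "resolve locally, send only a zip code and a date".

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Contact:
    name: str
    relation: str


@dataclass
class Event:
    title: str
    attendees: list
    zip_code: str
    day: date


# Hypothetical on-device data: contacts and calendar never leave the phone.
CONTACTS = [Contact("Maya", "daughter"), Contact("Sam", "brother")]
CALENDAR = [
    Event("Dentist", ["Sam"], "10001", date(2024, 6, 20)),
    Event("Piano recital", ["Maya"], "94110", date(2024, 6, 22)),
]


def fetch_weather(zip_code, day):
    """Stand-in for the only network call; it sees a zip code and a date."""
    return "rain"


def answer(owner, relation, keyword, weather_api=fetch_weather):
    # Step 1: work out who "my daughter" is from local contacts.
    person = next(c for c in CONTACTS if c.relation == relation)
    # Steps 2-3: find a calendar event matching the concept and the person.
    event = next(
        e for e in CALENDAR
        if keyword in e.title.lower() and person.name in e.attendees
    )
    # Step 4: the remote request carries only the zip code and the date.
    forecast = weather_api(event.zip_code, event.day)
    return (
        f"Well {owner}, it looks like it will {forecast} during your "
        f"{event.title} with {person.name} on {event.day.isoformat()}."
    )


print(answer("Alex", "daughter", "recital"))
```

Swapping `weather_api` for a function that records its arguments is an easy way to check what would actually leave the device.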

meanwhile i just want cheap gpus for my bideogames again

You can buy them new for somewhat reasonable prices. What people should really look at is used 1080ti’s on ebay. They’re going for less than $150 and still play plenty of games perfectly fine. It’s the budget PC gaming deal of the century.

it’s probably the best performance per dollar you can get, but a lot of modern games are unplayable on it.

Lot of those games are also hot garbage. Baldur’s Gate 3 may be the only standout title of late where you don’t have to qualify what you like about it.

I think the recent layoffs in the industry also portend things hitting a wall; games aren’t going to push limits as much as they used to. Combine that with the Steam Deck-likes becoming popular. Those could easily become the new baseline standard performance that games will target. If so, a 1080ti could be a very good card for a long time to come.

You’re misunderstanding the issue. As much as “RTX OFF, RTX ON” is a meme, the RTX series of cards genuinely introduced improvements to rendering techniques that were previously impossible to pull-off with acceptable performance, and more and more games are making use of them.

Alan Wake 2 is a great example of this. The game runs like ass on 1080tis on low because the 1080ti is physically incapable of performing the kind of rendering instructions they’re using without a massive performance hit. Meanwhile, the RTX 2000 series cards are perfectly capable of doing it. Digital Foundry’s Alan Wake 2 review goes a bit more in depth about it, it’s worth a watch.

If you aren’t going to play anything that came out after 2023, you’re probably going to be fine with a 1080ti, because it was a great card, but we’re definitely hitting the point where technology is moving to different rendering standards that it doesn’t handle as well.

So here’s two links about Alan Wake 2.

First, on a 1080ti: https://youtu.be/IShSQQxjoNk?si=E2NRiIxz54VAHStn

And then on a ROG Ally (which I picked because it’s a little more powerful than the current Steam Deck, and runs native Windows): https://youtu.be/hMV4b605c2o?si=1ijy_RDUMKwXKQQH

The ROG Ally seems to be doing a little better, but not by much. They’re both hitting sub 30fps at 720p.

My point is that if that kind of handheld hardware becomes typical, combined with the economic problems of continuing to make highly detailed games, then Alan Wake 2 is going to be an aberration. The industry could easily pull back on that, and I welcome it. The push for higher and higher detail has not resulted in good games.

I own a 1080ti and there was recently a massive update to Alan Wake 2 that made it more playable on Pascal GPUs. Digital Foundry did a video on it: http://youtu.be/t-3PkRbeO8A

I don’t know of any current game that can’t run at least 1080p30fps on 1080ti. But of course my knowledge is not exhaustive.

I wouldn’t expect every “next-gen” game to get the same treatment as Alan Wake 2 going forward. But we’re 4 years into the generation and there have probably been fewer than 10 games that were built to take full advantage of modern console hardware. My 1080ti has got a few more good years in it.

Can you reference those instructions more specifically?

not in my country lol. getting used cards was already the norm before; for a while you could literally only get used ones for a good price on aliexpress.

and now our government imposed a 100% tax on anything from china, so it’s really just not affordable.

Could import from Taiwan instead?

not really a good way. we can mostly only afford used and theres no outlet to buy used from taiwan that ships internationally in a trustworthy way.

brand new will probably be taxed too anyway.

Meanwhile I don’t think I have played more than 30 minutes on my PS5 this year and it’s June, and I have definitely not played any minutes on the 1080 sitting in my PC…

Oh fuck scratch that I may have played about 2 hours of Dune Spice Wars

How are you finding Dune? I watched a few let’s plays of the demo and it looked interesting…

Honestly, I don’t have an opinion on it. It didn’t capture me like Old World did last year, so it’s probably not as good, or I just prefer slower, thought-out turn-based stuff.

But mostly I am just kinda over gaming as a whole, I realized it’s mostly cheap dopamine chasing for me and I don’t really enjoy it.

Crack cocaine is 10000x more rewarding and less of a come down when you realize you only spent 1/10th of the price of a console or gaming PC.

Nvidia’s being pretty smart here ngl

This is the AI gold rush and they sell the tools.

Yes that’s the meme.

They will eat massive shit when that AI bubble bursts.

I mean if LLM/Diffusion type AI is a dead-end and the extra investment happening now doesn’t lead anywhere beyond that. Yes, likely the bubble will burst.

But, this kind of investment could create something else. We’ll see. I’m 50/50 on the potential of it myself. I think it’s more likely a lot of loud talking con artists will soak up all the investment and deliver nothing.

Bubbles have nothing to do with technology; the tech is just a tool to build the hype. The bubble will burst regardless of the success of the tech. At most, success will slightly delay the burst, because what is bursting isn’t the tech, it’s the financial structures around it.

It’s looking like a dead end. The content that can be fed into the big LLMs has already been done. New stuff is a combination of actual humans and stuff generated by LLMs. It then runs into an ouroboros problem where it just eats its own input.

I mostly agree, with the caveat that 99% of AI usage today is just stupid gimmicks and very few people or companies are actually using what LLMs offer effectively.

It kind of feels like when schools got sold those Smart Whiteboards that were supposed to revolutionize teaching in the classroom, only to realize the issue wasn’t the tech, but the fact that the teachers all refused to learn and adapt and let the things gather dust.

I think modern LLMs should be used almost exclusively as an assistive tool to help empower a human worker further, but everyone seems to want an AI that you can just tell ‘do the thing’ and have it spit out a finalized output. We are very far from that stage in my opinion, and as you stated LLM tech is unlikely to get us there without some sort of major paradigm shift.

> only to realize the issue wasn’t the tech

To be fair, electronic whiteboards are some of the jankiest piles of trash I’ve ever had to use. I swear to God you need to re-calibrate them every 5 minutes.

I doubt it. Regardless of the current stage of machine learning, everyone is now tuned in and pushing the tech. Even if LLMs turn out to be mostly a dead end, everyone investing in ML means that the ability to do LOTS of floating point math very quickly without the heaviness of CPU operations isn’t going away any time soon. Which means nVidia is sitting pretty.

the WWW wasn’t a dead end but the bubble burst anyway. the same will happen to AI because exponential growth is impossible.

It means having a shot at getting a good gaming gpu for cheap

As far as I understand the tech, those things aren’t really interchangeable :(

No they won’t, this tech isn’t going to go away even if it plateaus. All the GPUs they make will still get used.

As far as I understand, the GPUs that LLMs use aren’t exactly interchangeable with your regular GPU. Also, no one needs that many GPUs for any traditional use cases.

Admittedly, I bought an Nvidia card for AI. I am part of the problem.

I don’t think it’s a problem, more like a situation. You are not doing anything wrong or stupid, just interested in something new and promising and have the resources to pursue it. Good for you, may you find gold.

Serious Question:

Why is Nvidia AI king and I see nothing of AMD for AI?

I’m an AI Developer.

TLDR: CUDA.

Getting ROCm to work properly is like herding cats.

You need a custom implementation for the specific operating system, the driver version must be locked and compatible, especially with a Workstation / WRX card, the Pro drivers are especially prone to breaking, you need the specific dependencies to be compiled for your variant of HIPBlas, or zLUDA, if that doesn’t work, you need ONNX transition graphs, but then find out PyTorch doesn’t support ONNX unless it’s 1.2.0 which breaks another dependency of X-Transformers, which then breaks because the version of HIPBlas is incompatible with that older version of Python and …

Inhales

And THEN MAYBE it’ll work at 85% of the speed of CUDA. If it doesn’t crash first due to an arbitrary error such as CUDA_UNIMPEMENTED_FUNCTION_HALF

You get the picture. On Nvidia, it’s click, open, CUDA working? Yes?, done. You don’t spend 120 hours fucking around and recompiling for your specific usecase.

Also, you need a supported card. I have a potato going by the name RX 5500, not on the supported list. I have the choice between three rocm versions:

- An age-old prebuilt, generally works, occasionally crashes the graphics driver, unrecoverably so… Linux tries to re-initialise everything but that fails, it needs a proper reset. I do need to tell it to pretend I have a different card.

- A custom-built one, which I fished out of a docker image I found on the net because I can’t be arsed to build that behemoth. It’s dog-slow, due to using all generic code and no specialised kernels.

- A newer prebuilt, any. Works fine for some, or should I say, very few workloads (mostly just BLAS stuff), otherwise it simply hangs. Presumably because they updated the kernels and now they’re using instructions that my card doesn’t have.

#1 is what I’m actually using. I can deal with a random crash every other day to every other week or so.

It really would not take much work for them to have a fourth version: One that’s not “supported-supported” but “we’re making sure this thing runs”: Current rocm code, use kernels you write for other cards if they happen to work, generic code otherwise.

Seriously, ROCm is making me consider Intel cards. Price/performance is decent, plenty of VRAM (at least for its class), and apparently their API support is actually great. I don’t need CUDA or ROCm after all; what I need is PyTorch.
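For what it’s worth, that is roughly how it looks from the PyTorch side too: the code asks which backend is usable and otherwise doesn’t care. A minimal sketch, assuming a reasonably recent PyTorch; `pick_device` is a made-up helper name, and the `torch.xpu` check for Intel GPUs only exists in newer releases, hence the `getattr` guards. ROCm builds report through `torch.cuda` and set `torch.version.hip`.

```python
from types import SimpleNamespace


def pick_device(torch_like):
    """Return (device_string, backend_label) for the first usable backend.

    `torch_like` is the imported torch module, or any object mimicking the
    few attributes read here (handy for trying this without a GPU).
    """
    cuda = getattr(torch_like, "cuda", None)
    if cuda is not None and cuda.is_available():
        # ROCm builds answer through torch.cuda and set torch.version.hip;
        # CUDA builds leave torch.version.hip as None.
        hip = getattr(getattr(torch_like, "version", None), "hip", None)
        return "cuda", ("ROCm" if hip else "CUDA")
    xpu = getattr(torch_like, "xpu", None)  # Intel GPUs, newer PyTorch only
    if xpu is not None and xpu.is_available():
        return "xpu", "Intel XPU"
    return "cpu", "CPU"


# A stand-in with no GPU backend, to show the fallback without torch installed.
no_gpu = SimpleNamespace(cuda=SimpleNamespace(is_available=lambda: False))
print(pick_device(no_gpu))  # ('cpu', 'CPU')

try:
    import torch
except ImportError:
    torch = None

if torch is not None:
    device, backend = pick_device(torch)
    x = torch.ones(4, device=device)  # same tensor code on every backend
    print(backend, x.sum().item())
```

Whether a given card actually runs the workload once a backend reports as available is a separate question, as the three ROCm options above show.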

Simple Answer:

Cuda

I think it’s in the pipeline. AMD has bought Xilinx, which builds FPGAs and already had some AI specific cores in their processors. I believe they’re developing that further and integrating it in their GPUs now.

So, AMD has started slapping the AI branding on to some of their products, but they haven’t leaned in to it quite as hard as Nvidia has. They’re still focusing on their core product line up and developing the actual advancements in chip design.

All of this to run a program that is essentially typing a question into Google and adding “Reddit” at the end of it.

They spent so much time disconnected from reality and trying to create artificial intelligence that they forgot regular intelligence exists

I thought this was a strange trolley problem at first

Well Nvidia sure did get rich quick

Nvidia and AI technology are both legit. Those companies need Nvidia GPUs for their development.